Why is employee engagement key to organizational success?

Engaged employees feel empowered, are more satisfied with their jobs, take more care in their work, and provide better service to customers. By investing in employee engagement solutions, you can retain top talent, increase productivity, and improve business agility.

ThreeWill develops a comprehensive employee engagement solution tailored to your organization's unique needs. The platform is built around four key features:

Topics

- Automatically identify, process, and organize company content, resources, and expertise

- Organize information into easy-to-find categories across apps and teams

- Reduce time spent looking for information

- Protect valuable resources with built-in security and compliance features

Insights

- Get personalized, actionable insights to understand team performance

- Improve productivity and well-being with data-driven recommended actions

- Preserve privacy with aggregated and de-identified insights

- Stay connected with people regardless of their location

Learning

- Assign learning programs and track completion

- Provide ongoing skill-building with easy access to training content and learning systems

- Access company-wide formal and informal learning content

- Leverage AI recommendations to boost employee performance with the right content at the right time

Connections

- Provide a curated and company-branded modern employee experience

- Find resources with a personalized feed and dashboard

- Create a comprehensive view of resources with customized content for specific roles

- Personalize content feeds for each employee

Curious about what employee disengagement is costing your organization?

Disengaged employees can harm company culture, lowering morale and productivity. They may complain about their jobs or coworkers, create a negative work environment, and drag down the rest of the team. This negativity can be contagious: as other employees start to feel the same way, a culture clash can develop that further compounds other costs.

According to a 2019 Gallup report, actively disengaged employees make up 17.2% of the average US workforce: people who are genuinely unhappy, unproductive, and likely to spread those attitudes to others.

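To get a rough sense of scale for your own organization, the 17.2% figure can be applied to your headcount. The Python sketch below is a hypothetical illustration: the function name `estimate_disengagement` is our own, the default rate is the Gallup figure above, and the cost per actively disengaged employee is an assumption you must supply from your own data, since no cost figure is given here.

```python
from typing import Tuple


def estimate_disengagement(
    headcount: int,
    cost_per_disengaged: float,
    disengaged_rate: float = 0.172,  # 17.2% figure from the 2019 Gallup report cited above
) -> Tuple[int, float]:
    """Return (estimated actively disengaged employees, estimated total cost).

    cost_per_disengaged is a hypothetical input: supply your own estimate of
    what one actively disengaged employee costs your organization.
    """
    if headcount < 0:
        raise ValueError("headcount must be non-negative")
    if not 0.0 <= disengaged_rate <= 1.0:
        raise ValueError("disengaged_rate must be between 0 and 1")
    # Round to the nearest whole employee.
    disengaged = round(headcount * disengaged_rate)
    return disengaged, disengaged * cost_per_disengaged


if __name__ == "__main__":
    # 10,000.0 is a placeholder cost, not a benchmark.
    count, cost = estimate_disengagement(500, cost_per_disengaged=10_000.0)
    print(count, cost)  # prints "86 860000.0"
```

For a 500-person workforce, the 17.2% rate suggests roughly 86 actively disengaged employees; the total cost is only as good as the per-employee figure you plug in.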

What are the benefits of an employee experience platform?

Promote Employee Well-Being and Retention

Industry studies have shown that companies with highly engaged employees outperform their competitors. Highly engaged employees are interested in and passionate about their work because they feel valued and appreciated. This makes them more likely to stay with the organization and to recruit other skilled people to the team.

Strengthen Culture and Communications

Find and connect people, content, and services seamlessly in one centralized space, so everyone has what they need to succeed from anywhere, at any time, on any device. Build relationships through two-way communication among colleagues, teams, and managers, wherever they are located. When everyone is on the same page, frustration drops and loyalty grows.

Build Skills and Increase Innovation

Investing in learning and development encourages creativity and brings new ideas and innovations to the table. It also raises employees to a shared level of skills and knowledge, closing skill gaps across the company. When employees feel confident and supported, organizations retain top talent and build strong teams.

Address Challenges with Meaningful Insights

Data-driven insights and recommendations help identify gaps and drive organizational success. Give everyone, from leaders and managers to individual employees, opportunities to improve well-being and workforce efficiency. Balance productivity and well-being to help teams and individuals do their best work.

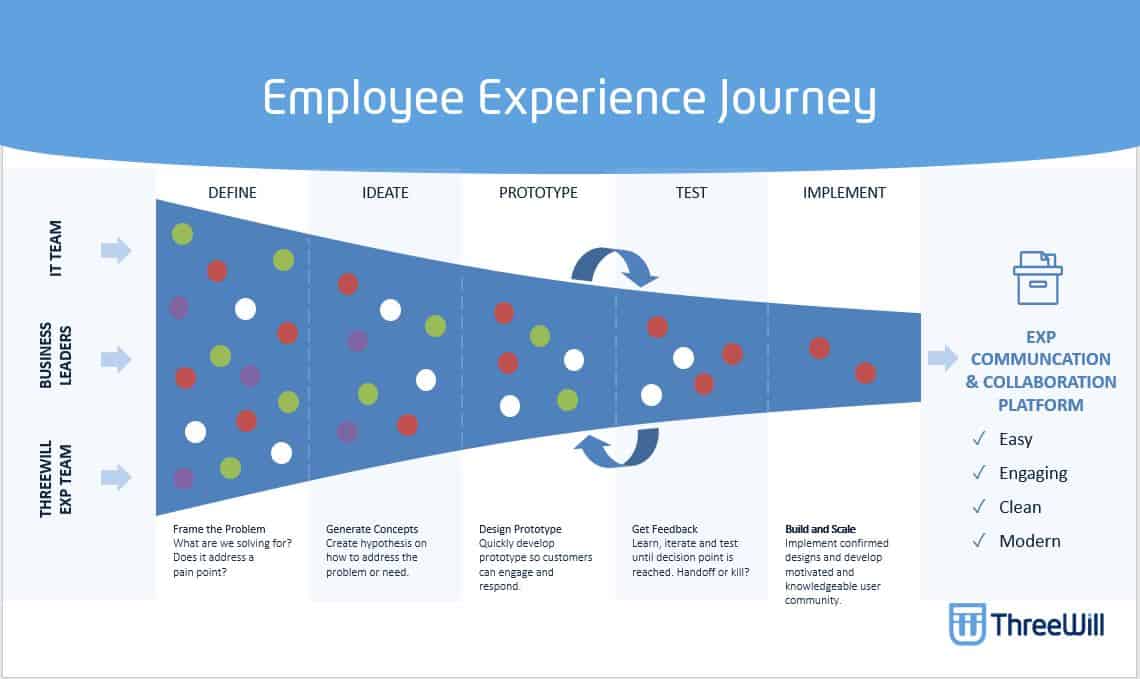

What are the first steps toward a new employee experience?

The first step in your journey to a new employee experience is to “begin with the end in mind”: design your future state, including a roadmap of the key activities and milestones needed to achieve the business outcomes you have defined.

The journey to an improved employee experience is shaped by your company culture, your individual employees, and your industry. However, every organization can use tools such as optimizers, intelligent intranets, and modern enterprise search to build a positive employee experience.

Optimizers

With a range of customizable components for a variety of business needs, Optimizers increase the value of your intranet.

- Custom search

- Audience targeting

- Training resources

- Social insights

- Reusable templates

Intelligent Intranets

Designed around the user, an intelligent intranet delivers personalized experiences that build community and culture within your organization.

- Manage workspaces

- Bridge disconnects

- Unified sharing

- Personalized content markers

- Enhanced data security

Enterprise Search

With a modern enterprise search system, your team can access everything they need, when and where they need it.

- Harness relevant insights

- Process-driven collaboration

- Enhanced decision making

- Advanced filtering for personalized search

Employee engagement is critical for every business.

Let ThreeWill be your guide to measuring and improving employee engagement to achieve key business outcomes.